AI is an Educational Accelerant, Not Replacement

I’ve written this blog with the intention of sharing my knowledge. The contents of this blog are my opinions only.

Introduction

Students and teachers I speak to in secondary and tertiary education do not know how to think about Generative AI and ChatGPT.

Some think banning it outright is best because of the potential for mass-scale cheating. Some think students using ChatGPT without restrictions is the best way to prepare for an AI-powered future. Underlying all of this is fear that the education system does not have the tools to educate people for the future.

I personally disagree with all of these framings and think that blindly following any of them could lead to disastrous learning outcomes.

In this article, I aim to explain why these commonly held views will likely be harmful, and propose what I think is a more helpful approach to Generative AI for both students and teachers.

In particular, I propose a perspective based on 3 related principles:

Generative AI is best understood as an educational accelerant, not a replacement for developing traditional educational fundamentals

If left unchecked, ChatGPT and similar tools would polarize educational results (lowering overall educational outcomes while allowing a small, motivated top end to excel)

The top priority for the education system should be to develop skills in areas that LLMs are incapable of addressing:

Researching and verifying information in the pursuit of truth

Determining one’s own objectives, including a sense of right and wrong

Mathematics

A Primer on ChatGPT and Large Language Models (LLMs)

Before we begin, it is worth having an understanding of how ChatGPT and similar LLMs work.

For those not already familiar with how ChatGPT works and what its major limitations are, I recommend one of my previous articles breaking down the topic.

For those without time to read it or who just want a refresher, LLMs are not infallible machine overlords. LLMs are statistical models with a strong intuitive grasp of human language, and they have 3 notable weaknesses that are inherent to the way they are built as of this writing:

They are not authoritative databases with a tight coupling to reality

They are incapable of defining their own objectives (and similarly are inappropriate tools for distinguishing right from wrong)

They are really, really bad at math

Why Would Banning ChatGPT Be Harmful?

I will start by challenging the first common approach of banning ChatGPT - why should ChatGPT not be banned wholesale in all educational settings?

Imagine a common sight - a struggling high-school student writing an essay on English Literature. It is not going well. Perhaps they missed some earlier school and fell behind in grammar. Maybe English is not the primary language spoken at home. Or maybe they just naturally struggle with writing essays.

At the moment, if this student wanted help to improve their writing, they would have 4 options:

Approach their already overworked teacher (who may not have time to coach 1:1)

Approach their parents (who will likely not have the knowledge or expertise to help)

Hire a writing tutor for the subject (an expensive and ineffective undertaking for many)

Continue stumbling along, likely performing poorly

Whether it is because of financial constraints, time constraints, a lack of personal connection with the teacher/tutor, social awkwardness around approaching teachers for help, or just because of the sheer friction of the whole process, most of these students end up in the 4th bucket. However, the 4th bucket is exactly where nobody interested in public education wants students to end up.

ChatGPT provides a solution to this problem. If used properly, ChatGPT could enable this struggling student to improve their grasp of English by identifying grammatical errors, providing corrections and providing clear reasons. ChatGPT could give that student feedback about the logical flow of the document and how they could improve. All of this could be done in private, on demand, and at low-to-no cost to the student.

Furthermore, the benefits of ChatGPT are not just limited to struggling students. Imagine now a curious student who wants to learn about topics not covered in the syllabus or at their school. Now curious, driven students have assistants that can help explain and summarise any topic they want to learn about, whether it is Nuclear Fusion or CRISPR/Cas9.

The potential impact of a 1:1 tutor available to everyone cannot be overstated. In 1984, educational psychologist Benjamin Bloom found that students with access to a private tutor performed 2 standard deviations better than students taught in classrooms (see Bloom’s 2 Sigma Problem).
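To put "2 standard deviations" into more familiar terms, here is a minimal sketch that converts it into a percentile. It assumes classroom scores are roughly normally distributed, which is a simplification added here for illustration; the function and variable names are also just illustrative.

```python
from statistics import NormalDist

# Assumption (for illustration only): classroom scores follow a normal
# distribution, so a z-score maps cleanly onto a percentile.
SIGMA_GAIN = 2.0  # the "2 sigma" improvement Bloom reported for tutored students


def percentile_of(z_score: float) -> float:
    """Percentage of a standard normal population scoring below z_score."""
    return NormalDist().cdf(z_score) * 100


tutored_percentile = percentile_of(SIGMA_GAIN)
print(
    "Under this assumption, the average tutored student would sit at roughly "
    f"the {tutored_percentile:.0f}th percentile of a conventional class"
)
```

Under that assumption, the average tutored student lands at about the 98th percentile of a conventionally taught class, which is why the gap is treated as such a big deal.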

Therefore, completely banning ChatGPT use would be harmful because it would deprive students of a much needed educational tool that can meet a need currently unmet by education systems everywhere.

However, there is a reason I used the qualification “if used properly.”

Why Would Unrestricted Use of ChatGPT Be Harmful?

Now to challenge the second commonly held view of allowing ChatGPT to be used unconstrained - why should ChatGPT not be allowed without restrictions or guidance?

Let’s take another view of the struggling student from before. Instead of using ChatGPT to coach them in grammar and slowly improve their abilities, they could just use it to write the essay for them and hand it in unedited.

If this were allowed, students would often hand in nicer sounding essays. It would also defeat the purpose of education.

Writing is the process by which we realise that we don’t know what we are talking about. Only by writing do you give your thoughts form and allow them to be critiqued and improved. It makes the invisible visible, and it is vital for the development of independent thought.

Using ChatGPT to skip this process robs struggling students of this development. Not only does our struggling student leave their weaknesses unaddressed, they also allow whatever creative muscles they do have to atrophy.

This is not the end of the harm, however. Using ChatGPT blindly without understanding its limitations can lead people to depend on it where it is not appropriate.

None of this is mere speculation - both of these patterns were observed in a recent study performed by the BCG Henderson Institute on the effects of using ChatGPT in the workplace.

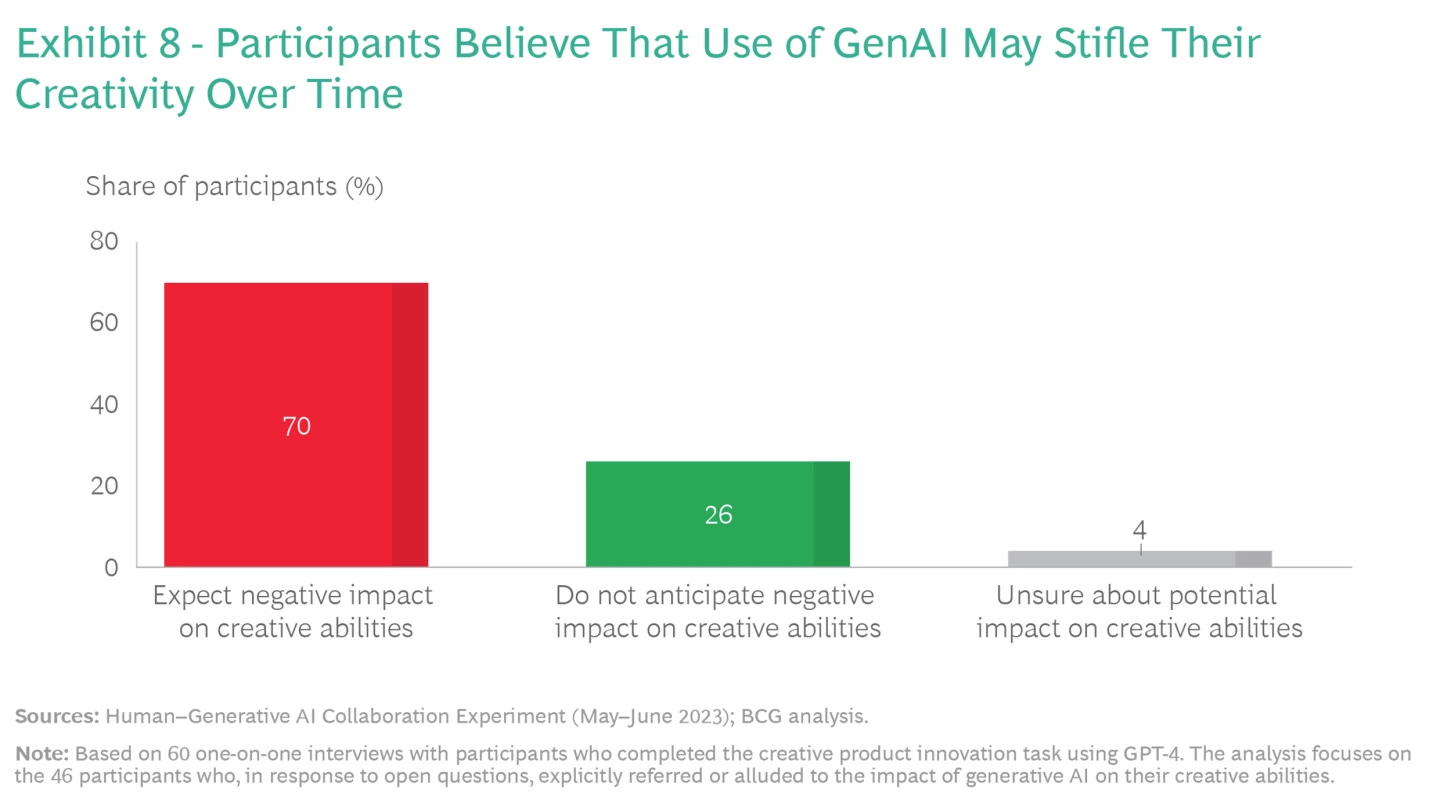

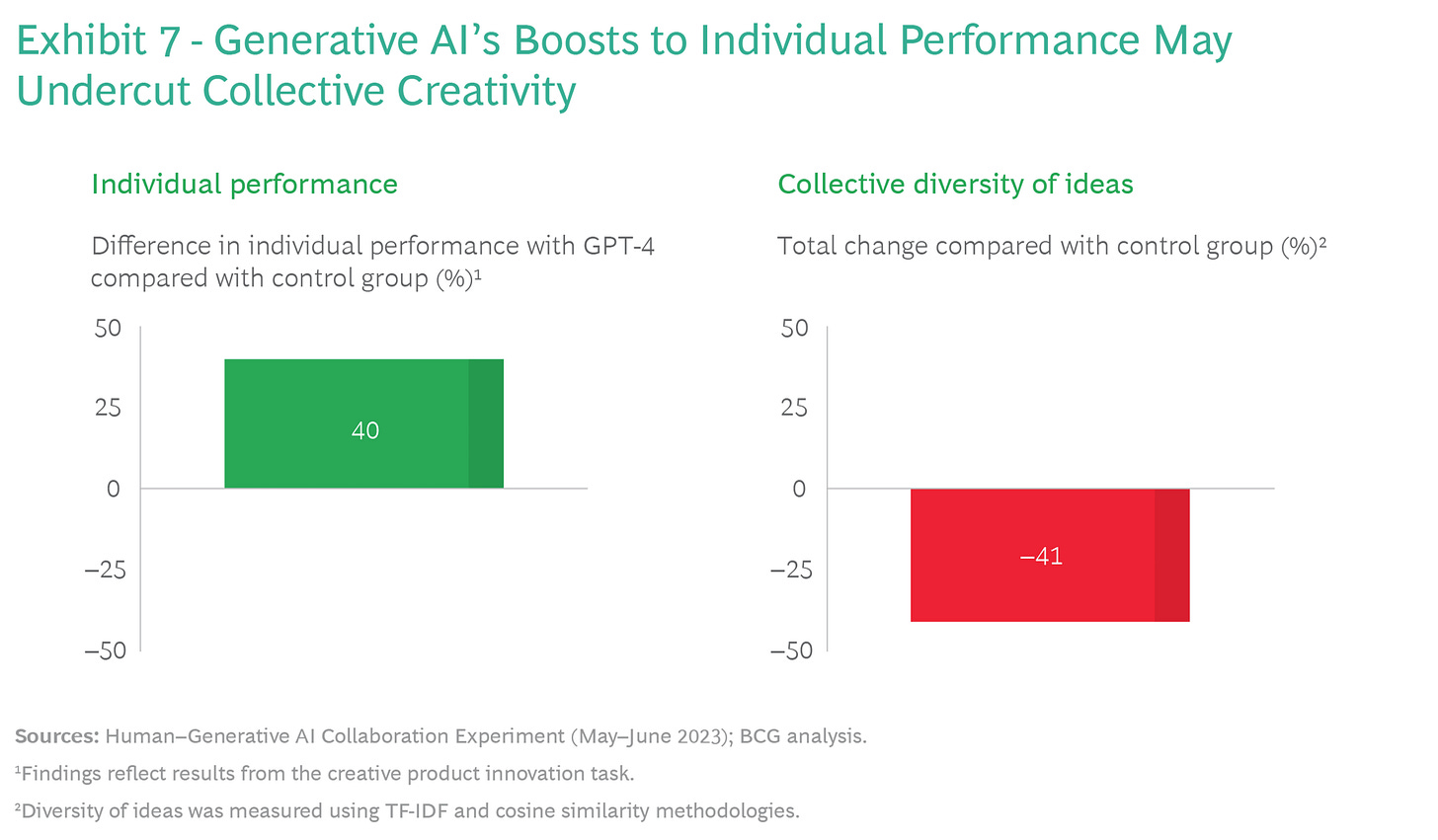

Researchers found that for tasks that ChatGPT is currently good at (e.g. creative ideation), users who leveraged ChatGPT reported feeling their creative abilities atrophy while using it.

Furthermore, while the output of these users seemed better than that of those who did not use ChatGPT, the diversity of ideas among ChatGPT users was drastically lower than among non-ChatGPT users.

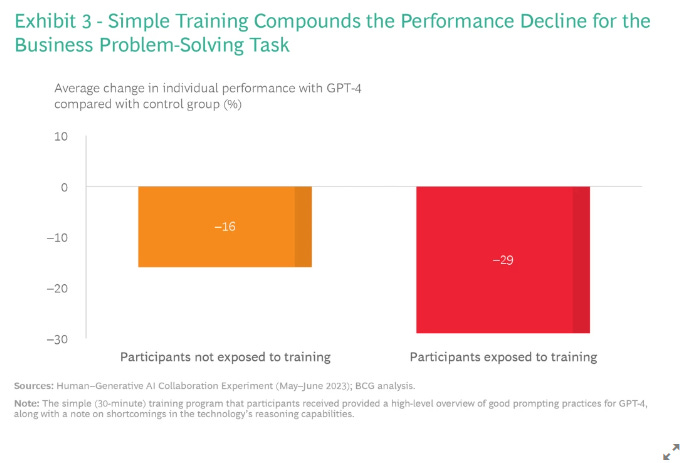

For tasks that ChatGPT is not good at (e.g. business problem solving), users with ChatGPT access often over-relied on it and performed worse than people without it, even when they were given training on the limitations of ChatGPT.

ChatGPT - If Left Untreated

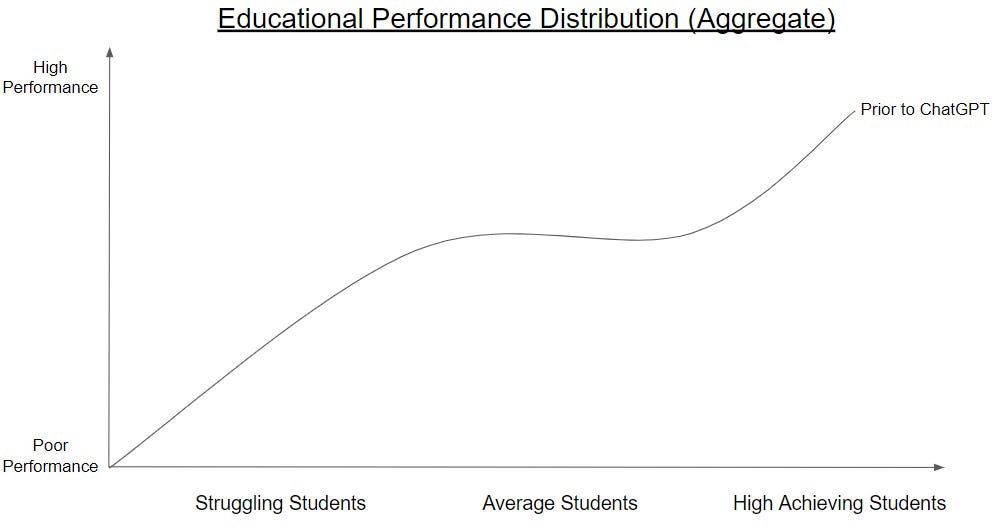

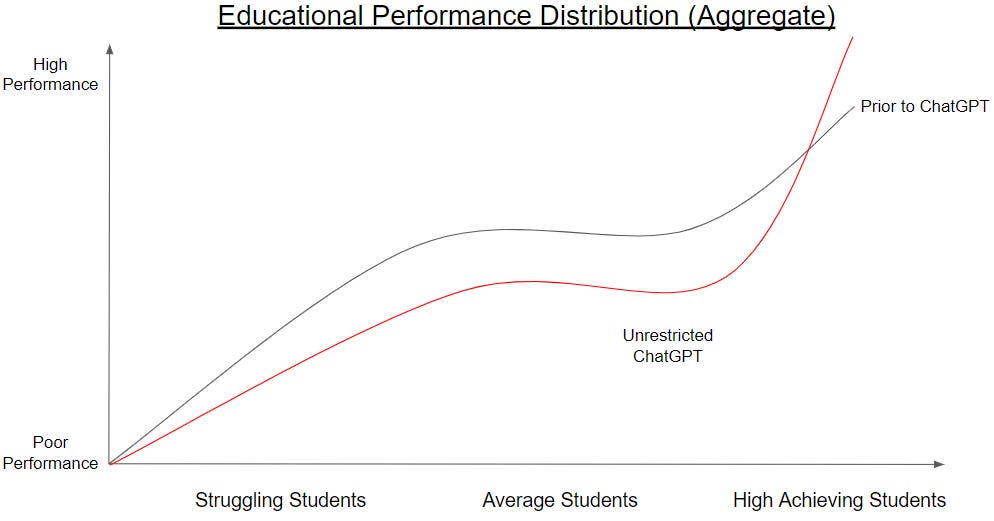

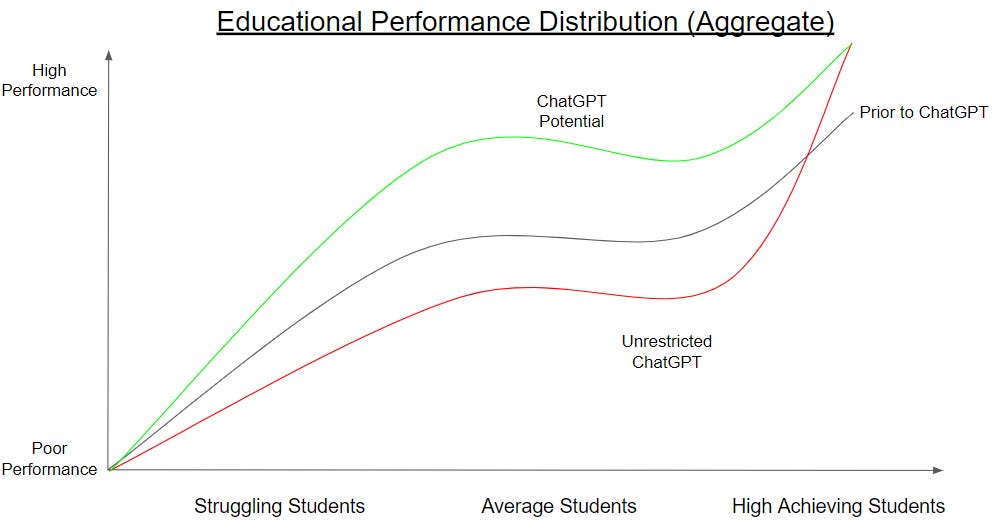

Prior to ChatGPT’s launch, teachers and students would all be familiar with a distribution of student performance that looks something like the following:

A small number of curious, driven students perform well and learn almost no matter what

A small number of students struggle to learn, almost no matter what is thrown at them

Most students live in the middle - not high performing, but not lagging either

If ChatGPT were allowed to take its course with no systematic intervention, I believe outcomes would start to look more like this:

A small number of smart, highly motivated students would leverage ChatGPT as an educational accelerant and personal coach

The average and underperforming students would atrophy academically, as ChatGPT is leveraged as an educational replacement

This kind of polarized outcome is undesirable: while the top end could perform better, the net amount of learning and educational development would regress across student cohorts.

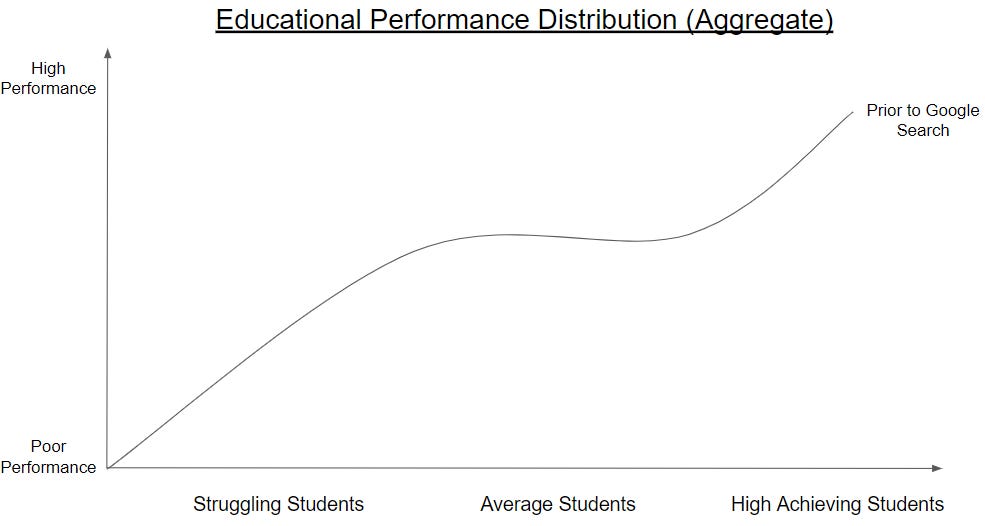

We Have Been Through This Before - Google Search

While ChatGPT looks and feels novel to most of us, I believe it is not the first time many of us have seen a new technology have an educational impact like this.

The proliferation of the internet (particularly Google Search) massively disrupted how students approached studying, particularly when it comes to reading and research.

Before Google, if you were a student and you wanted to do research, you needed to go to a library. Not only that, you needed to go to the library and ask for help finding a book. If the book was checked out, you might need to wait a few weeks for someone to return it. Once you had gathered your books, you needed to read a summary of each one to figure out whether it contained the information you were looking for.

In short, it was a labour-intensive process that yielded a similar pattern to what we saw before:

A small number of dedicated students learned a lot by diving through libraries and developed a personal interest in reading

Some recalcitrant or struggling students never went to the library and thus never absorbed the knowledge contained within it

Most students were in the middle, using the library on the occasions they were compelled to or when CliffsNotes were not available

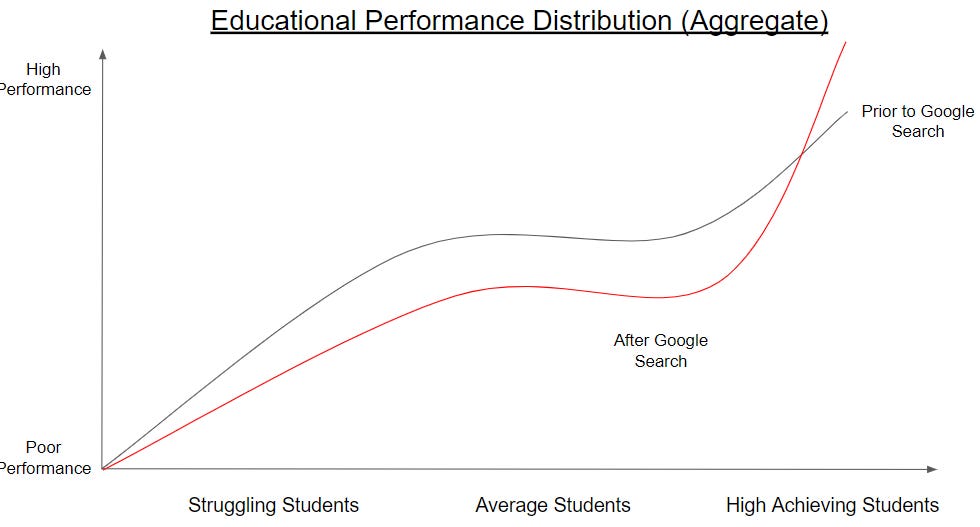

With the rise of Google Search, however, this process changed. Suddenly, instead of going to a library, students could go to their computer and get access to most of the information they were looking for instantly.

For high performing students, this enabled them to learn more than generations before them. Instead of spending weeks diving through libraries and all the dead air that entailed, they could access information about whatever they wanted to learn almost instantly. Furthermore, they were not just limited to the knowledge that was contained in their local library. Students who wanted to learn about Complex Numbers or Ancient Roman History could do so at the touch of a button.

However, it also meant that students could instantly get access to sites like Wikipedia, SparkNotes, and many other resources for cheating or reducing the time they spent reading. Furthermore, it gave access to YouTube, memes, and many other distractions.

Thus, we ended up with a pattern that looks like this again, with high performers performing even better and poor and average performers learning less.

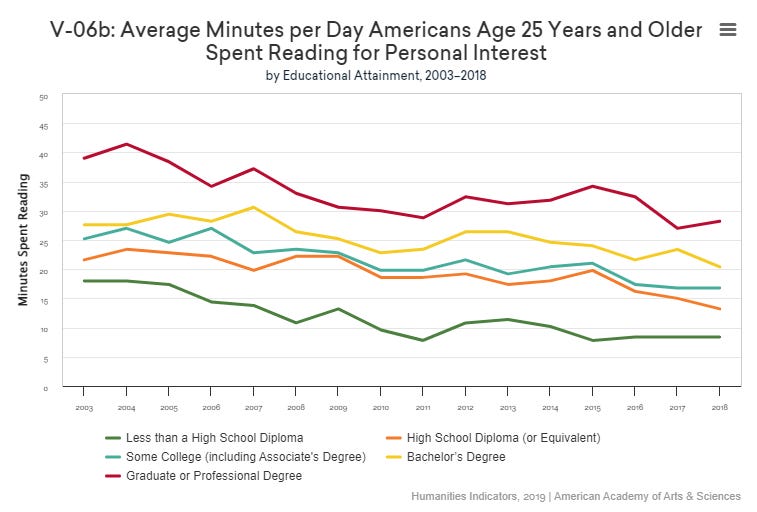

While it is difficult to find a specific graph that shows aggregate drops in time spent researching and pursuing knowledge, a useful proxy is the drop in time spent reading for personal interest between 2003 and 2018, as reported by the American Academy of Arts and Sciences:

Where Do We Go From Here?

Now that we have a conceptual framework for understanding ChatGPT and its likely impacts on education if left unaddressed, how do we harness its potential while minimising downside risk?

I believe there are two broad areas for people to consider:

What subjects and skills should students be learning?

How should we go about teaching them as a system?

Subjects and Skills

Many people I speak to express a sense of fear over the future of education because they do not know what subjects teachers should teach or what skills students should develop in this new era. Some think we need to throw out old curriculums and invent new AI-infused subjects or courses on AI ethics.

I take the opposite view on this - I think that a portfolio of traditional subjects (Math, English, History, etc.) already has the ingredients for developing the skills students need to fill the Big 3 Gaps in Generative AI (the independent pursuit and verification of the truth, the development of a sense of what is right and wrong, and Mathematics).

Furthermore, I think doubling down on traditional and proven approaches to character building is crucial to filling the Big 3 Gaps.

Regarding the portfolio of subjects, exactly which subjects should be offered by schools and selected by students will vary, but the following disciplines with the following emphases are a good place to start:

Investigative Journalism - the process of discovering, reasoning about and presenting information not yet uncovered

History - the process of interpreting and evaluating recorded evidence to come independently to a sense of truth, understanding what could or could not have happened, and extrapolating implications for the future about the story of humanity

Persuasive writing - the process by which we realise that we don’t know what we are talking about, wrestling with our own ideas, making invisible beliefs and assumptions visible, and presenting a cohesive point of view out the other end

Philosophy - the rigorous study of the fundamentals of thought, knowledge, reality and humanity

Foreign Languages - the study of other cultures in their own terms and vocabulary

Arts and Crafts - the art of learning to see and display the world through a variety of mediums and disciplines

Mathematics and mathematical sciences (e.g. Physics, Chemistry, Computer Science, Engineering, etc.) - the study of the language of logical reasoning and problem solving

If a student selects a basket of the above subjects and develops a self-sustaining curiosity in them that goes beyond the classroom, that is the definition of success. Good litmus tests of self-sustaining curiosity include reading for personal interest (reading quality books, articles and other high quality media on topics they care about) and independent writing (writing in one’s own time to make sense of themselves and the world).

Regarding character building, it is important to acknowledge that the aforementioned Big 3 Gaps in Generative AI (especially points 1. and 2.) cannot be met by curriculum alone. Being intellectually honest in the pursuit of truth and having a deep sense of right and wrong require levels of emotional maturity and personal character that often cannot be developed through assessment alone.

While the ideal methods for developing character, and which character traits we should learn to emulate, have always been a matter of political contention, few disagree that extracurricular activities (especially sport) are excellent mechanisms for schools to facilitate emotional development. Furthermore, when done well, some form of rigorous argumentation (whether through activities such as debating, studies of religion or other philosophical studies) tends to facilitate the development of principled views on the world and on what separates right from wrong.

Brass Tacks - How to implement as a system

So, I argue that the content we teach should not change much. But how, then, should schools adapt their teaching and assessment methods to take ChatGPT into account? How do we ensure it acts as an educational accelerant rather than an educational replacement?

If we want ChatGPT to accelerate students, we need to design classes and assessments that do the following:

Educate students on ways they can use it as an effective personal tutor (improving phrasing, asking whether there are logical inconsistencies or structural weaknesses)

Educate students on the limits of LLMs, and encourage them not to use LLMs in areas where they will produce bad results (e.g. citing sources, business problem solving)

Encourage students to learn to do something themselves first, the slow way (e.g. writing an essay) before they use ChatGPT as a crutch

Furthermore, we must ensure that at no point are students allowed or encouraged to use LLMs to originate content (e.g. writing reports or essays).

While there are many ways to go about this, assessment methods could include the following:

Information Verification - provide information that is either incorrect or biased, and force students to verify the information

E.g. Take a paragraph from an old newspaper or book, get them to find the sources and argue whether the book’s statement was correct or not

Personal Writing - ask students to write about and reflect on personal experiences (i.e. information that an LLM would not have access to) and take a stance on it

ChatGPT Free Assessments - get students to perform assessments in formats where they do not have access to a computer or LLM (e.g. hand-written exams, impromptu assessments, verbal assessments without notes, etc.)

Ask students to synthesise information too big or long to shove into an LLM

At this point in time, LLMs have limits preventing people from inserting blocks of text of unlimited length (i.e. a ‘context limit’)

Furthermore, research has shown that LLM accuracy across models drops when more information is passed into a prompt (e.g. if you pass in 10,000 words, the model is less likely to generate a correct answer than if you pass in only 1,000)

Therefore, assessments that require ingestion of larger pieces of information can be LLM-resistant
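For teachers who want a rough sense of whether a reading is "too big to shove into an LLM", the check can be sketched in a few lines. Everything numeric here is an assumption for illustration: the words-to-tokens ratio is a crude rule of thumb that varies by model, the context limit is a made-up figure rather than the limit of any real product, and the function names are hypothetical.

```python
# All numbers below are illustrative assumptions, not properties of any real model.
TOKENS_PER_WORD = 1.3                 # crude rule of thumb for English text
HYPOTHETICAL_CONTEXT_LIMIT = 8_000    # tokens; a made-up limit for this sketch


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from a whitespace-separated word count."""
    return round(len(text.split()) * TOKENS_PER_WORD)


def fits_in_context(text: str, limit: int = HYPOTHETICAL_CONTEXT_LIMIT) -> bool:
    """True if the estimated token count is within the assumed context limit."""
    return estimate_tokens(text) <= limit


# Stand-ins for a short handout and a long set of readings.
short_reading = "word " * 1_000
long_reading = "word " * 10_000

print(fits_in_context(short_reading))  # ~1,300 estimated tokens, within the assumed limit
print(fits_in_context(long_reading))   # ~13,000 estimated tokens, over the assumed limit
```

A reading that clears whatever limit currently applies is not automatically safe, since the accuracy drop described above kicks in well before the hard limit, so this is a starting heuristic rather than a guarantee.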

Finally, it is worth accepting that there will be no perfect technical approach to implementing these principles at scale:

Blocking ChatGPT in schools will be of limited usefulness for committed cheaters (as we saw with school filters for gaming websites, if there is a will there is a way to access them)

It may be a while before we get an LLM that acts like a good teacher (i.e. one that refuses to do work for students) for similar reasons (any filter you put in front of an LLM to stop people from cheating will not stop those committed enough to try to get around it)

While some groups are working on methods for watermarking LLM outputs (e.g. this approach from the University of Maryland), implementations have yet to yield results in the field and it is not clear how effective they will be

As a result, any implementation of these principles will depend on both school enforcement and cultural acceptance among students of how they should approach assessment for their own long term best interest.

Conclusions

In his public appearances, Amazon founder Jeff Bezos has famously talked about how Amazon tries to make its biggest bets on things that are unlikely to change in the next 10 years, rather than on things that will.

For example, Amazon invested in building Amazon Prime because they believe consumers will always want lower prices, more selection, and faster delivery (which makes sense; I don’t expect many people to start complaining “I love shopping on Amazon, I just wish you would deliver my packages slower and charge me more for each package”).

It is tempting to think that, because ChatGPT and other LLMs are new and evolving, we need to fundamentally change how we approach education to keep up. However, I think it is better to formulate the question in reverse - what has not changed in the era of LLMs, and how can we double down on the things that we already know can work?

Without a fundamental paradigm shift in how LLMs work, none of the fundamental issues I discussed here will be completely resolved. As a result, I think it is safe to bet on the traditional methods and approaches discussed earlier.

I personally do not foresee a future where students and parents go to their teachers and say “thank you for teaching my child, however could you please stop teaching them to be so good at verifying sources? They’ve got an algorithm for determining absolute truth now.”

None of the solutions I propose radically transform how schools or students approach education, and this is intentional. I recommend instead that education systems adapt to LLMs by learning how to use them to accelerate what we already know works, and to work to finally solve Bloom’s 2 Sigma Problem.

Thank you for reading this piece. I hope you found it useful or enlightening in some way.

If you found this helpful, or if you think it was horrible and you need to yell at me about it, let me know at this email: seeking.brevity@gmail.com.

There are topics that came up during writing this that I removed for brevity’s sake. If you have an interest in more pieces like this, let me know as well.